Configuring and Using HDFS Tiered Storage (Part 2)

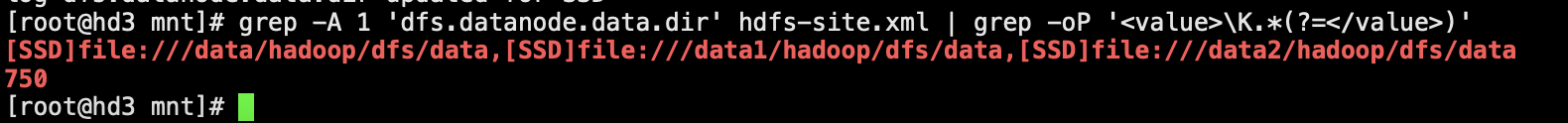

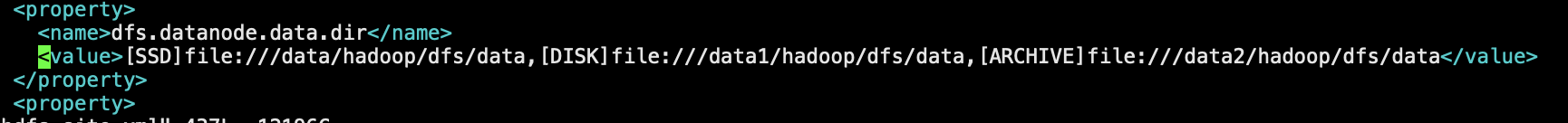

Modify the DataNode data directories: tag one disk as SSD, one as DISK, and one as ARCHIVE.

<property>
  <name>dfs.datanode.data.dir</name>
  <value>[SSD]file:///data/hadoop/dfs/data,[DISK]file:///data1/hadoop/dfs/data,[ARCHIVE]file:///data2/hadoop/dfs/data</value>
</property>
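To double-check which storage type each directory ends up with, the value can be split on commas and the bracketed tag read from each entry. The following is only a minimal sketch: `list_storage_dirs` is a hypothetical helper, not part of Hadoop, and it assumes that an entry without a tag counts as DISK, which is the documented HDFS default.

```shell
#!/bin/bash
# list_storage_dirs (hypothetical helper): print "<storage type> <directory>"
# for every comma-separated entry of a dfs.datanode.data.dir value.
list_storage_dirs() {
    local value=$1 entry type path
    local re='^\[([A-Z_]+)\](.*)$'
    local -a entries
    # Split on commas into an array; this avoids glob expansion of "[SSD]".
    IFS=',' read -r -a entries <<< "$value"
    for entry in "${entries[@]}"; do
        if [[ $entry =~ $re ]]; then
            type=${BASH_REMATCH[1]}
            path=${BASH_REMATCH[2]}
        else
            # Assumption: an untagged directory is treated as DISK.
            type=DISK
            path=$entry
        fi
        # Strip the file:// scheme so only the local path remains.
        printf '%s %s\n' "$type" "${path#file://}"
    done
}

list_storage_dirs "[SSD]file:///data/hadoop/dfs/data,[DISK]file:///data1/hadoop/dfs/data,[ARCHIVE]file:///data2/hadoop/dfs/data"
```

For the value configured above, this prints one line per directory, pairing SSD with /data, DISK with /data1, and ARCHIVE with /data2.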

1. Test SSD storage by running wordcount

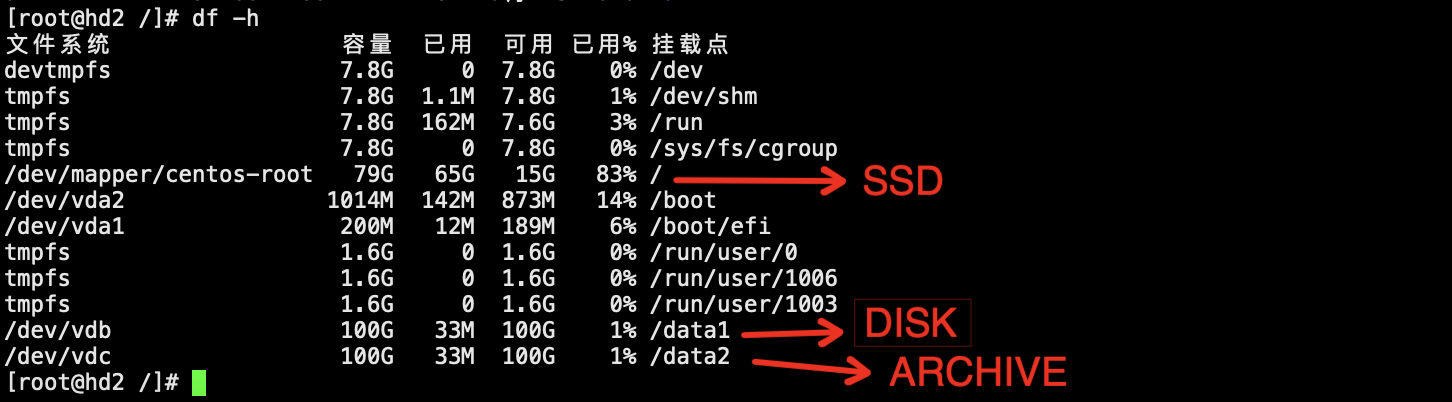

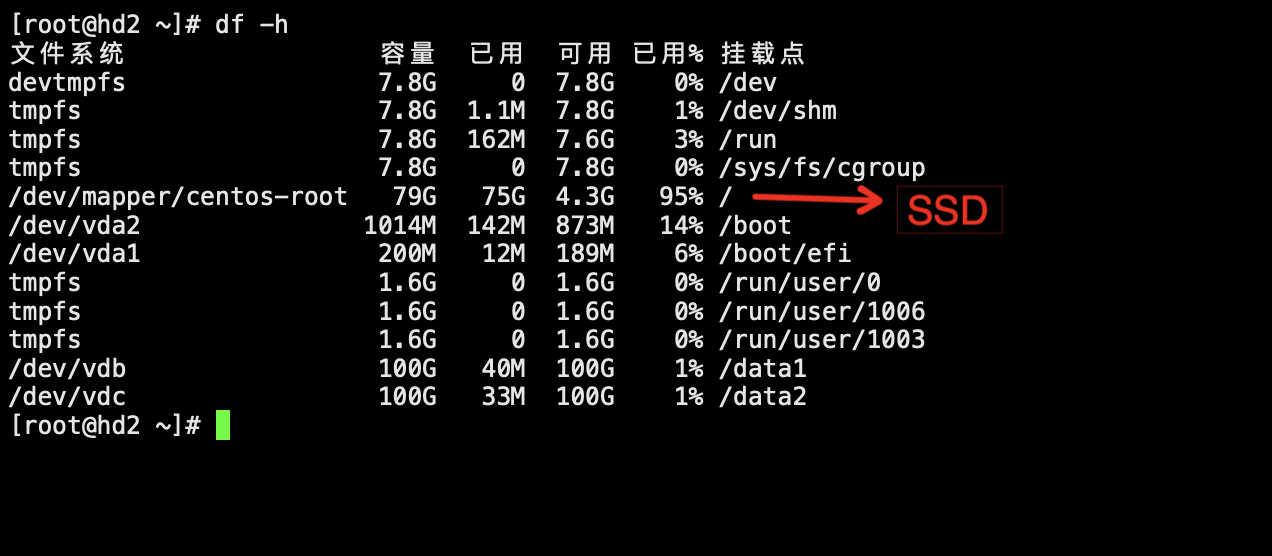

Disk usage before the job is submitted

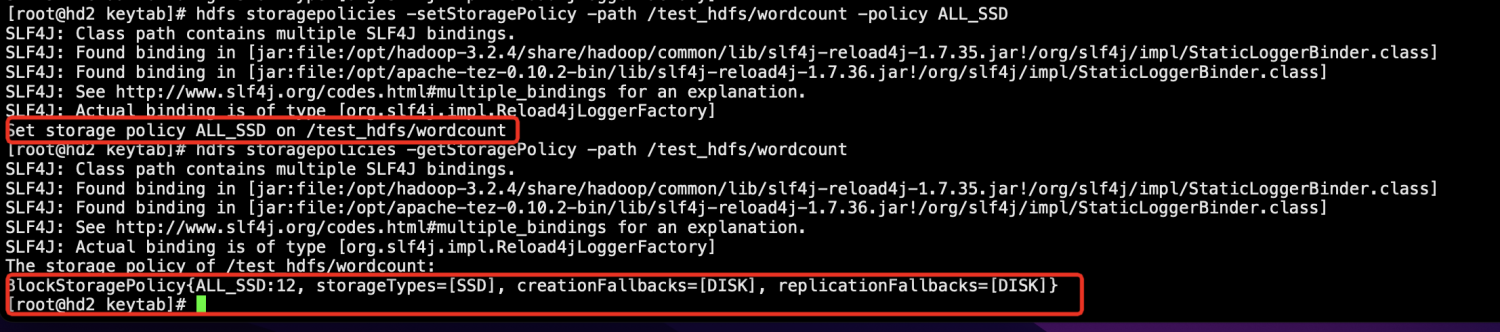

Set the storage policy of the HDFS data directory used by the wordcount job to ALL_SSD

[root@hd2 keytab]# hdfs dfs -mkdir -p /test_hdfs/wordcount

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/hadoop-3.2.4/share/hadoop/common/lib/slf4j-reload4j-1.7.35.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/apache-tez-0.10.2-bin/lib/slf4j-reload4j-1.7.36.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Reload4jLoggerFactory]

[root@hd2 keytab]# hdfs storagepolicies -setStoragePolicy -path /test_hdfs/wordcount -policy ALL_SSD

Set storage policy ALL_SSD on /test_hdfs/wordcount

[root@hd2 keytab]# hdfs storagepolicies -getStoragePolicy -path /test_hdfs/wordcount

The storage policy of /test_hdfs/wordcount:

BlockStoragePolicy{ALL_SSD:12, storageTypes=[SSD], creationFallbacks=[DISK], replicationFallbacks=[DISK]}

[root@hd2 keytab]#
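When this check is scripted, the policy name can be extracted from the `BlockStoragePolicy{...}` line instead of being read by eye. A minimal sketch, assuming the output format shown in the transcript above; `policy_name_from_output` is a hypothetical helper name.

```shell
#!/bin/bash
# policy_name_from_output (hypothetical helper): read the output of
# `hdfs storagepolicies -getStoragePolicy` on stdin and print only the
# policy name from the "BlockStoragePolicy{NAME:ID, ...}" line.
policy_name_from_output() {
    sed -n 's/^BlockStoragePolicy{\([A-Z_]*\):[0-9]*,.*$/\1/p'
}

# Demonstration on the two result lines captured in the transcript above.
printf '%s\n' \
    'The storage policy of /test_hdfs/wordcount:' \
    'BlockStoragePolicy{ALL_SSD:12, storageTypes=[SSD], creationFallbacks=[DISK], replicationFallbacks=[DISK]}' \
    | policy_name_from_output

# With a live cluster (assumes the hdfs CLI is on PATH):
#   actual=$(hdfs storagepolicies -getStoragePolicy -path /test_hdfs/wordcount | policy_name_from_output)
#   [ "$actual" = "ALL_SSD" ] || echo "policy mismatch: $actual" >&2
```

Lines that do not match the pattern, such as the SLF4J warnings, are simply ignored because `sed -n` prints only the substituted line.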

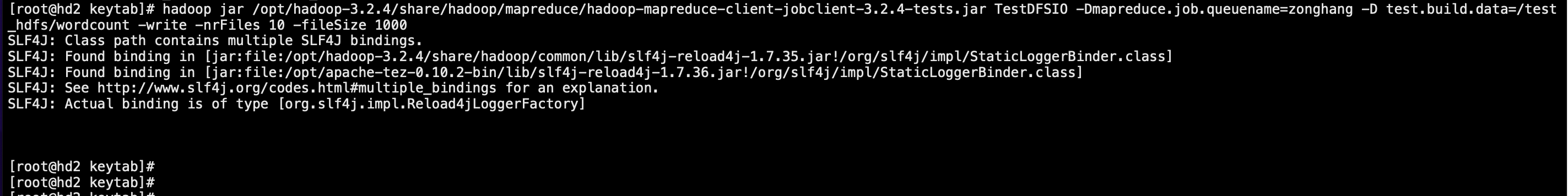

Run the data-generation job to produce 10 GB of test data

[root@hd2 keytab]# hadoop jar /opt/hadoop-3.2.4/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-3.2.4-tests.jar TestDFSIO -Dmapreduce.job.queuename=zonghang -D test.build.data=/test_hdfs/wordcount -write -nrFiles 10 -fileSize 1000

After the data is generated, check the disks: only the SSD usage has grown.

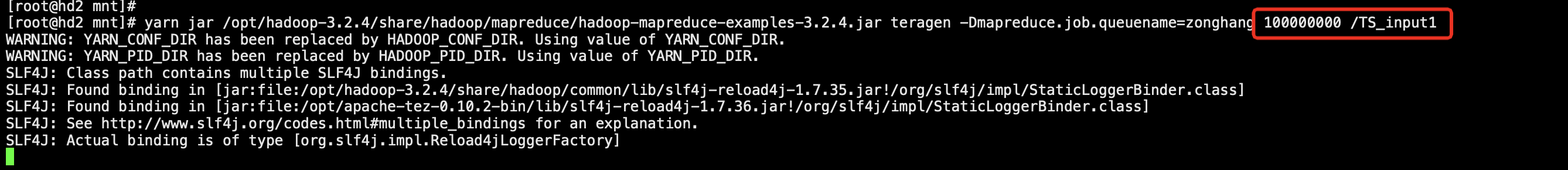

Submit the wordcount job, generating 10 GB of data

[root@hd2 mnt]# yarn jar /opt/hadoop-3.2.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.4.jar teragen -Dmapreduce.job.queuename=zonghang 100000000 /TS_input1

WARNING: YARN_CONF_DIR has been replaced by HADOOP_CONF_DIR. Using value of YARN_CONF_DIR.

WARNING: YARN_PID_DIR has been replaced by HADOOP_PID_DIR. Using value of YARN_PID_DIR.
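The data sizes quoted in these tests follow from simple arithmetic: teragen writes fixed 100-byte rows, and TestDFSIO writes `-nrFiles` files of `-fileSize` each (MB by default). The helpers below are a hypothetical sketch of that arithmetic and do not call Hadoop.

```shell
#!/bin/bash
# All three function names are hypothetical; they only do arithmetic.

# teragen rows are 100 bytes each, so total bytes = rows * 100.
teragen_bytes() { echo $(( $1 * 100 )); }

# Rows needed for a target size in decimal GB (10^9 bytes).
teragen_rows_for_gb() { echo $(( $1 * 1000000000 / 100 )); }

# TestDFSIO: total MB = number of files * file size in MB.
dfsio_total_mb() { echo $(( $1 * $2 )); }

teragen_bytes 100000000      # rows used in the teragen command above
teragen_rows_for_gb 50       # rows needed for a 50 GB data set
dfsio_total_mb 10 1000       # -nrFiles 10 -fileSize 1000
```

The first call yields 10,000,000,000 bytes (10 GB), the second 500,000,000 rows, and the third 10,000 MB, which match the figures used throughout these tests.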

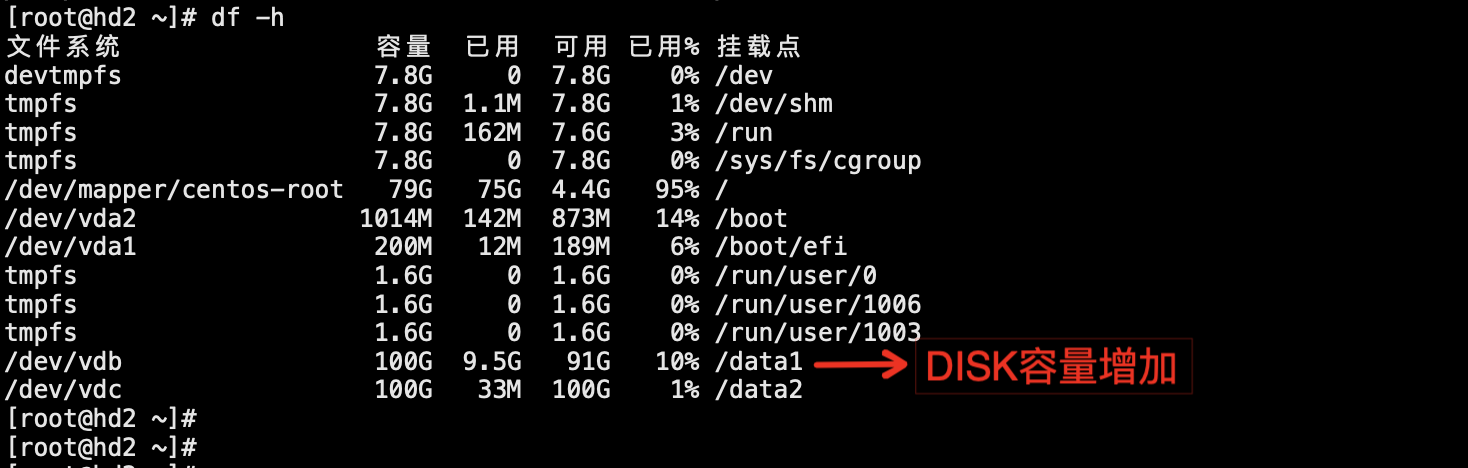

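After the wordcount job finishes, check the disks again. The directory where wordcount writes its intermediate data during execution has no storage policy assigned, so it uses the default HOT policy, which is why the amount of data in the DISK directory also grows.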
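The before/after comparison in the screenshots can also be done from the command line by recording the size of each DataNode data directory around the job. This is a rough sketch only: both helper names are hypothetical, and the paths in the comments are the ones from the `dfs.datanode.data.dir` setting at the top of this article.

```shell
#!/bin/bash
# dir_usage_kb (hypothetical helper): size of a directory in KiB, via du.
dir_usage_kb() { du -sk "$1" | awk '{print $1}'; }

# report_growth (hypothetical helper): print how much a tier grew,
# given a label and the before/after sizes in KiB.
report_growth() {
    local label=$1 before=$2 after=$3
    printf '%s grew by %d KiB\n' "$label" "$(( after - before ))"
}

# Intended use on a DataNode:
#   ssd_before=$(dir_usage_kb /data/hadoop/dfs/data)
#   disk_before=$(dir_usage_kb /data1/hadoop/dfs/data)
#   ... run the job ...
#   report_growth SSD  "$ssd_before"  "$(dir_usage_kb /data/hadoop/dfs/data)"
#   report_growth DISK "$disk_before" "$(dir_usage_kb /data1/hadoop/dfs/data)"
```

A non-zero DISK delta after a job whose input directory is ALL_SSD would point to data written outside that directory, as described above.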

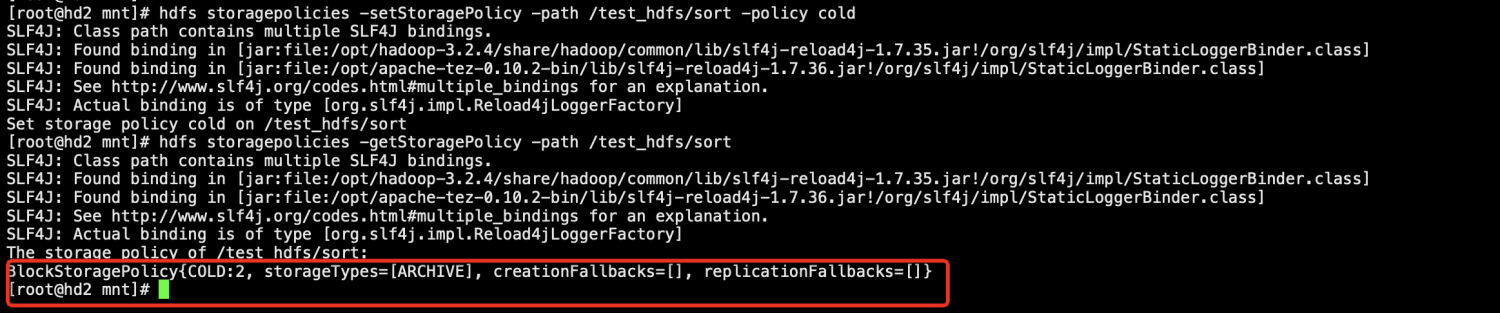

2. Test ARCHIVE storage by running sort

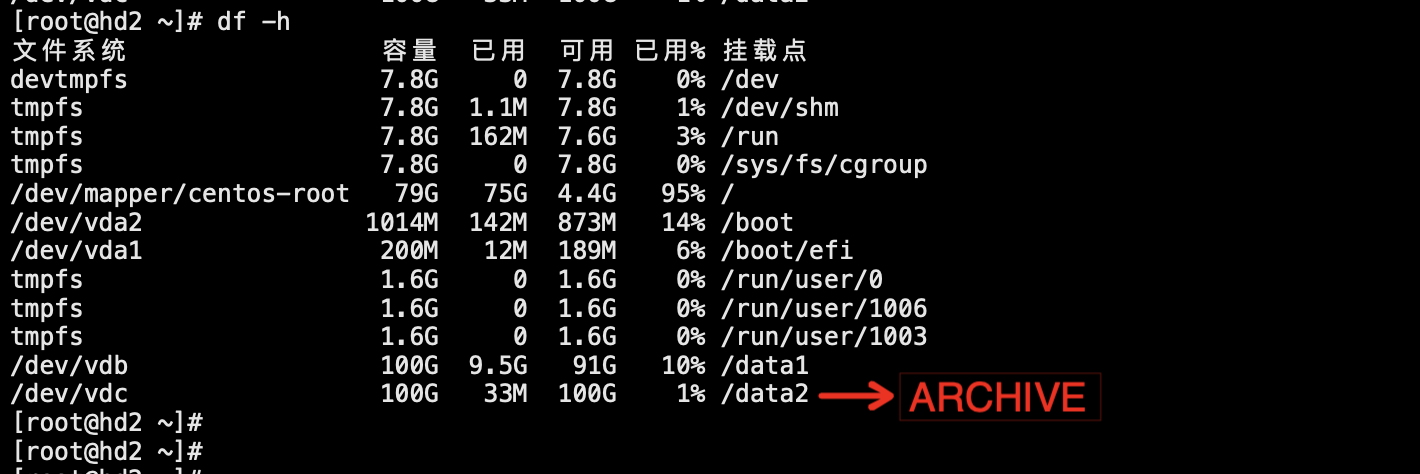

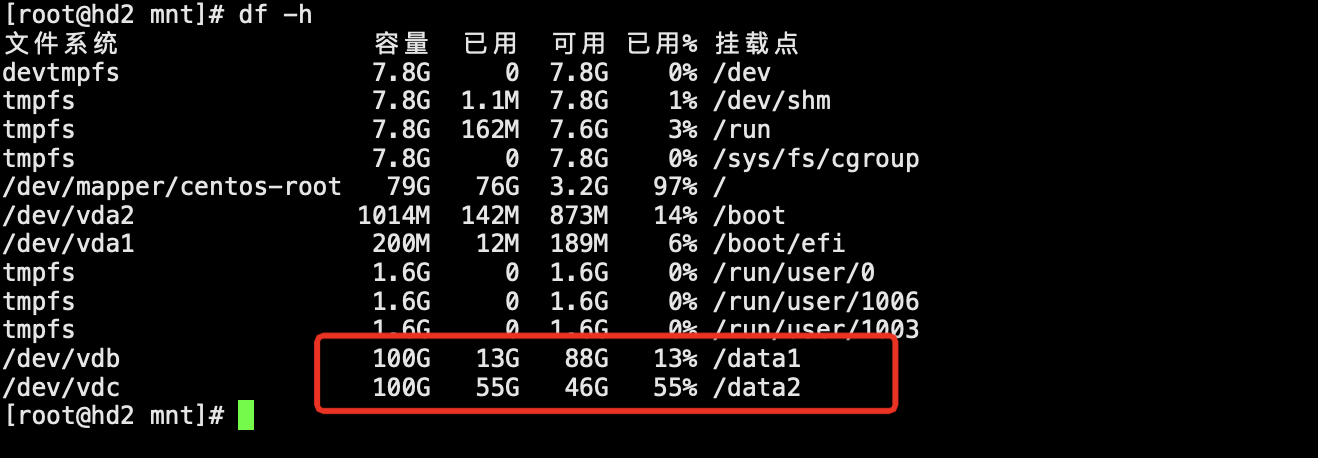

Disk usage before the job is submitted

Set the storage policy of the HDFS data directory used by the sort job to COLD

[root@hd2 mnt]# hdfs storagepolicies -setStoragePolicy -path /test_hdfs/sort -policy cold

Set storage policy cold on /test_hdfs/sort

[root@hd2 mnt]# hdfs storagepolicies -getStoragePolicy -path /test_hdfs/sort

The storage policy of /test_hdfs/sort:

BlockStoragePolicy{COLD:2, storageTypes=[ARCHIVE], creationFallbacks=[], replicationFallbacks=[]}

[root@hd2 mnt]#

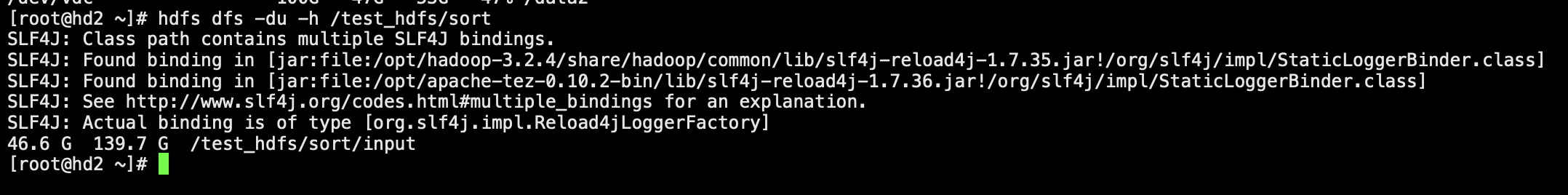

Run the data-generation job to produce 50 GB of test data

[root@hd2 mnt]# yarn jar /opt/hadoop-3.2.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.4.jar teragen -Dmapreduce.job.queuename=zonghang 500000000 /test_hdfs/sort/input

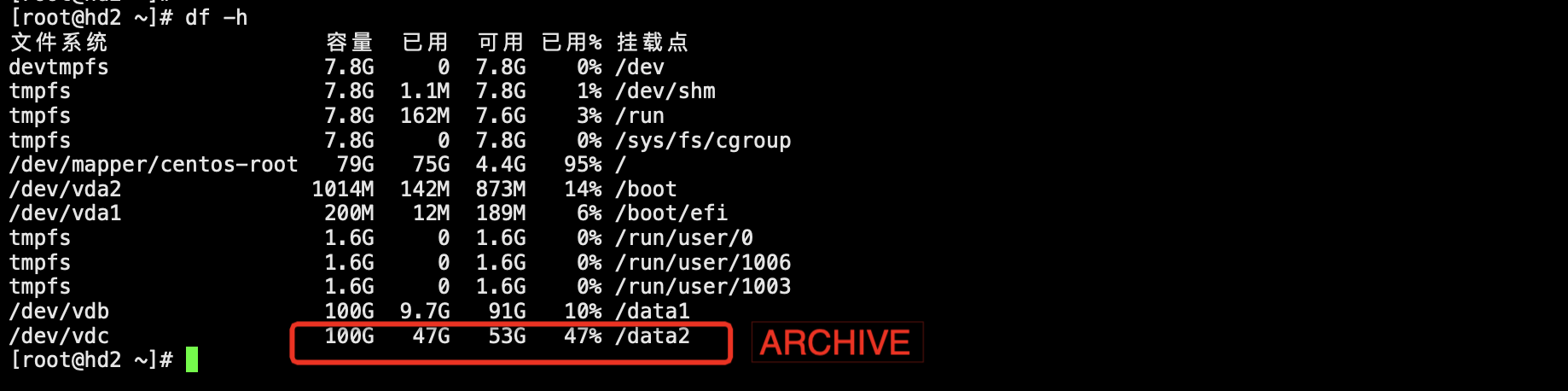

After the data is generated, check the disks: only the usage of the ARCHIVE disk has grown.

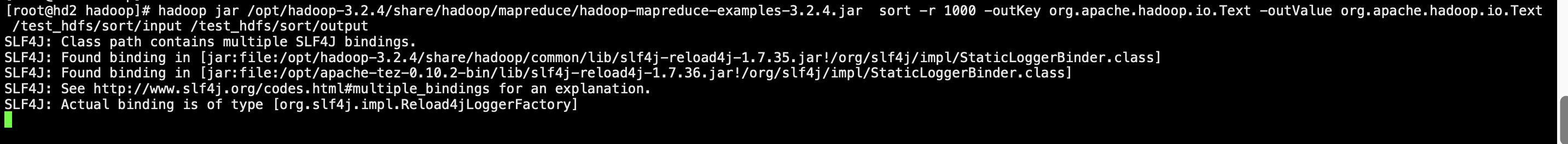

Submit the sort job

[root@hd2 hadoop]# hadoop jar /opt/hadoop-3.2.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.4.jar sort -r 1000 -outKey org.apache.hadoop.io.Text -outValue org.apache.hadoop.io.Text /test_hdfs/sort/input /test_hdfs/sort/output

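After the sort job finishes, check the disks again. The directory where sort writes its intermediate data during execution has no storage policy assigned, so it uses the default HOT policy. As a result, the DISK directory grows in addition to the ARCHIVE directory.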
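To see which storage types actually hold the blocks of a path, one option is to count them in the output of `hdfs fsck <path> -files -blocks -locations`. The sketch below assumes that fsck prints each replica as `DatanodeInfoWithStorage[host:port,storageID,storageType]`; the sample line is made up for illustration and is not captured from this cluster, and `count_storage_types` is a hypothetical helper.

```shell
#!/bin/bash
# count_storage_types (hypothetical helper): read fsck output on stdin and
# print "<storage type> <replica count>" for each storage type found.
# Assumption: replicas appear as DatanodeInfoWithStorage[host:port,id,TYPE].
count_storage_types() {
    grep -o 'DatanodeInfoWithStorage\[[^]]*]' \
        | sed 's/.*,\([A-Z_]*\)]$/\1/' \
        | sort | uniq -c | awk '{print $2, $1}'
}

# Synthetic sample line (illustrative only, not real cluster output).
sample='0. BP-1:blk_1_1 len=134217728 Live_repl=2 [DatanodeInfoWithStorage[10.0.0.1:9866,DS-a,ARCHIVE], DatanodeInfoWithStorage[10.0.0.2:9866,DS-b,DISK]]'
printf '%s\n' "$sample" | count_storage_types

# Intended use on a cluster (assumes the hdfs CLI is on PATH):
#   hdfs fsck /test_hdfs/sort -files -blocks -locations | count_storage_types
```

If everything under the COLD directory reports only ARCHIVE, the extra DISK growth must come from paths outside that directory.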

3. Test DISK storage by running terasort

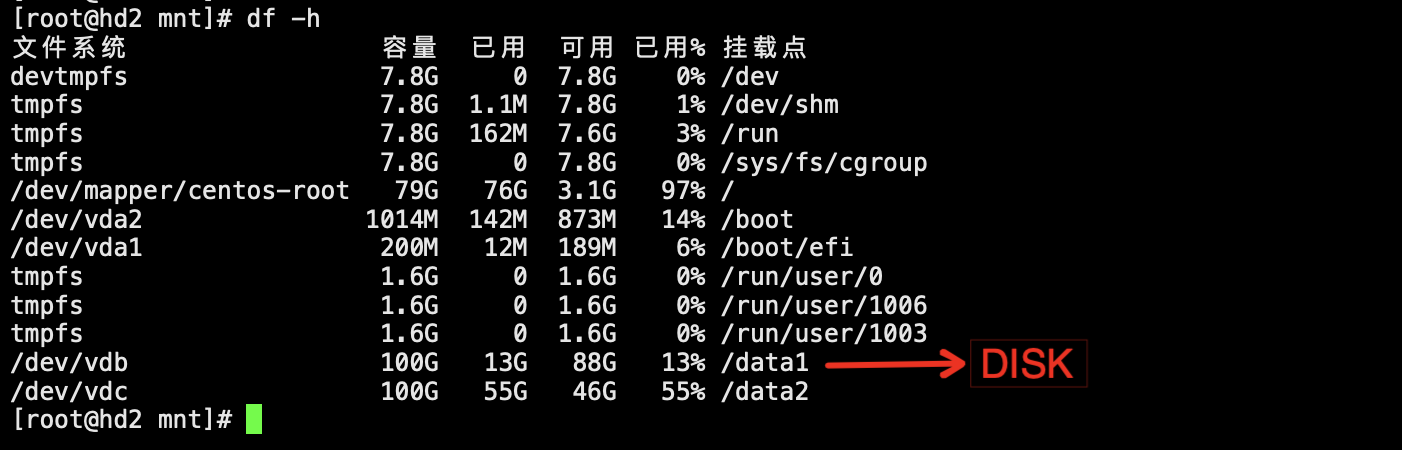

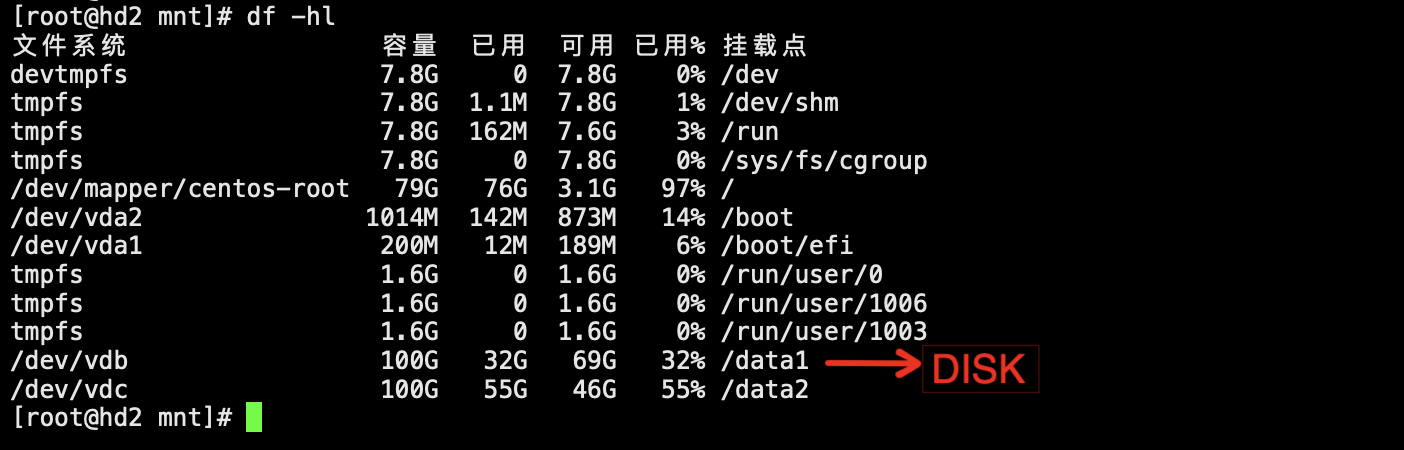

Disk usage before the job is submitted

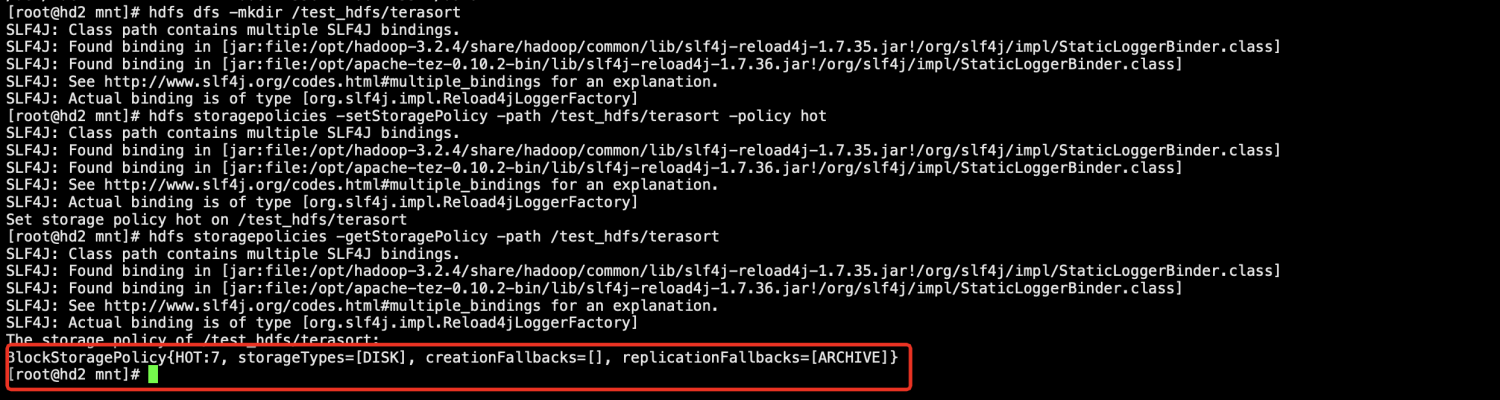

Set the storage policy of the HDFS data directory used by the terasort job to HOT

[root@hd2 mnt]# hdfs dfs -mkdir /test_hdfs/terasort

[root@hd2 mnt]# hdfs storagepolicies -setStoragePolicy -path /test_hdfs/terasort -policy hot

Set storage policy hot on /test_hdfs/terasort

[root@hd2 mnt]# hdfs storagepolicies -getStoragePolicy -path /test_hdfs/terasort

The storage policy of /test_hdfs/terasort:

BlockStoragePolicy{HOT:7, storageTypes=[DISK], creationFallbacks=[], replicationFallbacks=[ARCHIVE]}

[root@hd2 mnt]#

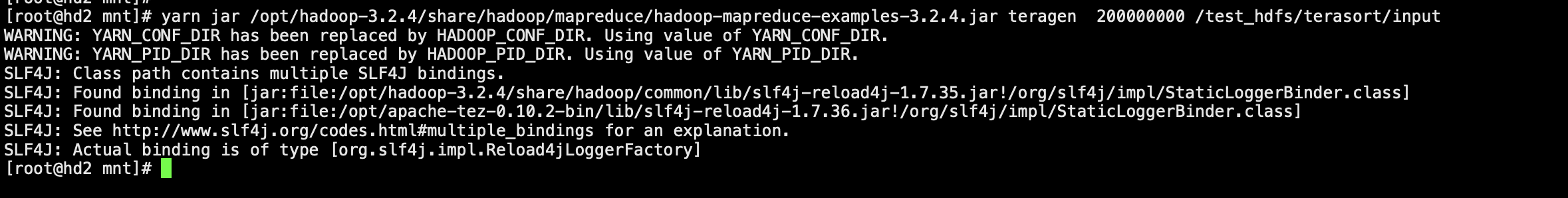

Run the data-generation job to produce 20 GB of test data

[root@hd2 mnt]# yarn jar /opt/hadoop-3.2.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.4.jar teragen 200000000 /test_hdfs/terasort/input

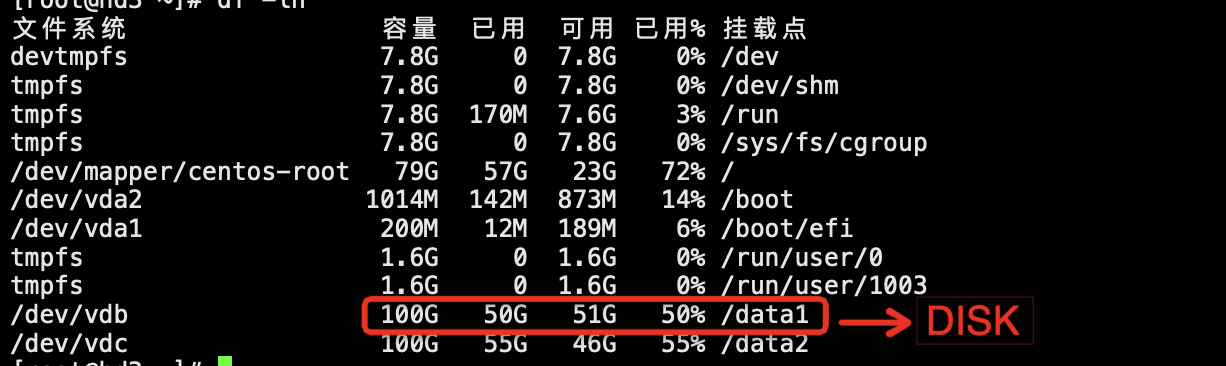

After the data is generated, check the disks: only the DISK directory has grown.

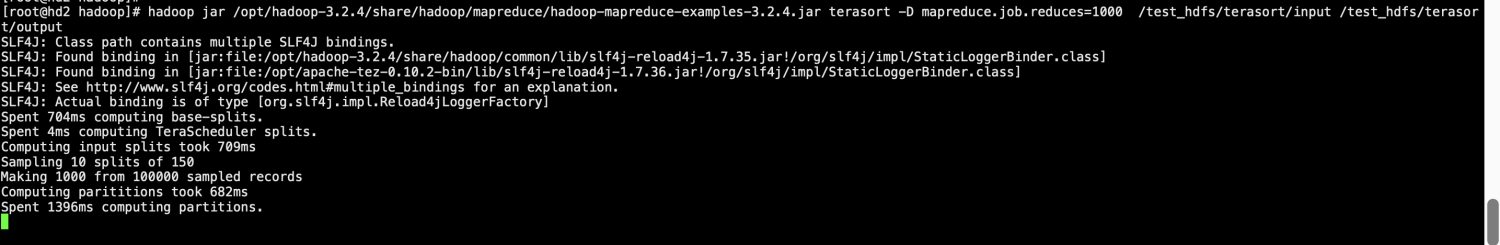

Submit the terasort job

[root@hd2 hadoop]# hadoop jar /opt/hadoop-3.2.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.4.jar terasort -D mapreduce.job.reduces=1000 /test_hdfs/terasort/input /test_hdfs/terasort/output

Spent 704ms computing base-splits.

Spent 4ms computing TeraScheduler splits.

Computing input splits took 709ms

Sampling 10 splits of 150

Making 1000 from 100000 sampled records

Computing parititions took 682ms

Spent 1396ms computing partitions.

After the terasort job finishes, check the disks again: only the DISK directory has grown.

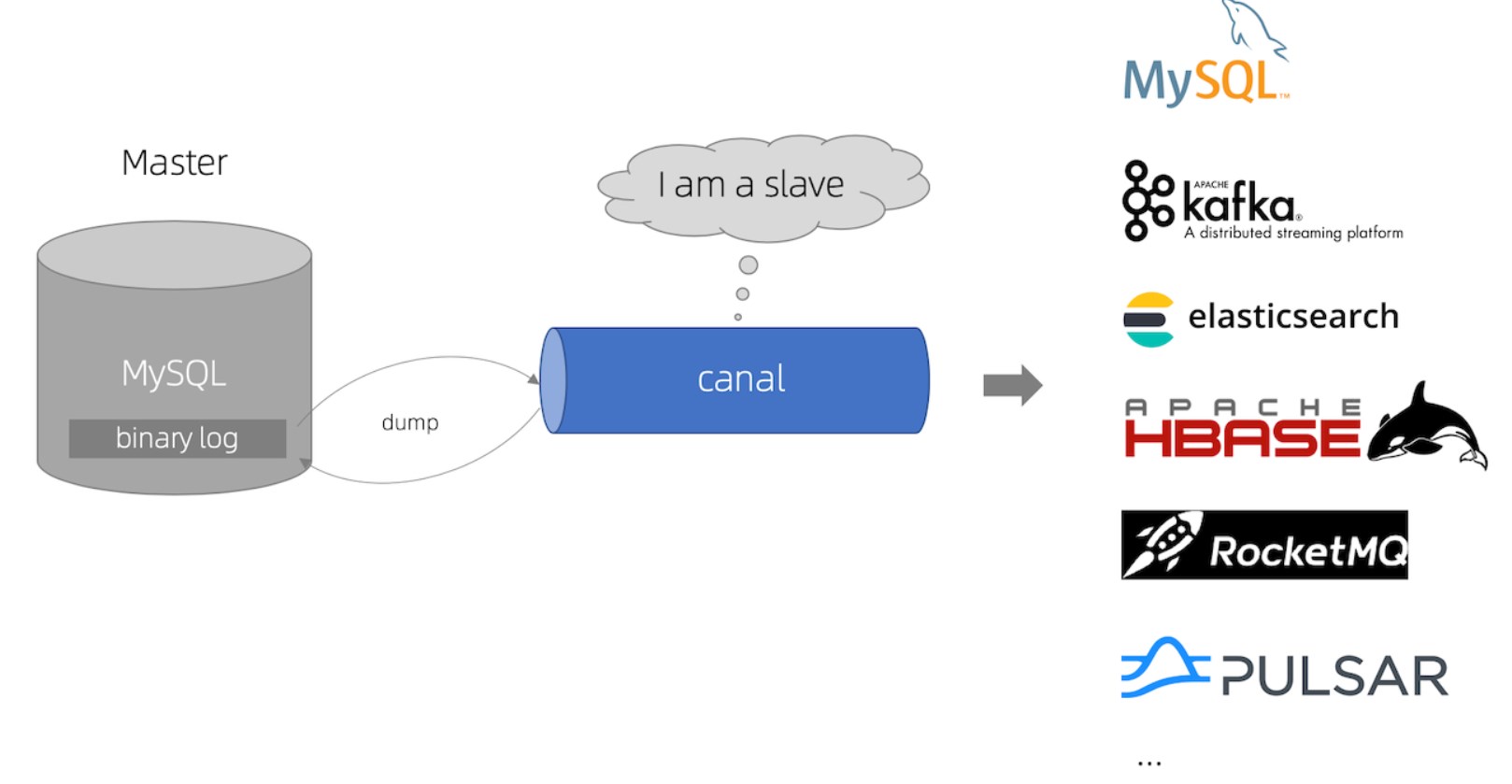

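5. Summary

1. Configure the HDFS data directories with the storage type of each disk, then assign a storage policy to the relevant HDFS directories. Data is then written to disks of the matching storage type, which gives HDFS tiered storage.

2. When using HDFS tiered storage, pay attention to how data is distributed: frequently accessed data can go on SSD, archived data on ARCHIVE disks, and commonly used baseline data on DISK disks. Distributing data sensibly lets each disk type be used where it performs best and improves the overall price-performance ratio.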
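The allocation guidance above could be captured as a small lookup from access pattern to built-in policy name. This is a sketch under assumptions: the labels `frequent`, `regular`, and `archive` and the function name `policy_for` are invented for illustration, and the policy chosen for each label simply mirrors the three tests in this article.

```shell
#!/bin/bash
# policy_for (hypothetical helper): map an access-pattern label to one of
# the HDFS storage policies exercised in this article.
policy_for() {
    case "$1" in
        frequent) echo ALL_SSD ;;   # hot working set, kept on SSD
        regular)  echo HOT ;;       # everyday data, kept on DISK
        archive)  echo COLD ;;      # rarely read data, kept on ARCHIVE
        *) echo "unknown access pattern: $1" >&2; return 1 ;;
    esac
}

policy_for frequent
policy_for regular
policy_for archive

# Intended use (assumes the hdfs CLI is on PATH; the path is an example):
#   hdfs storagepolicies -setStoragePolicy -path /some/dir -policy "$(policy_for archive)"
```

Keeping the mapping in one place makes it harder to assign a tier by accident when many directories are configured.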

6. Script

#!/bin/bash

hdfs_conf_dir="/opt/hadoop/etc/hadoop"
log_file="/var/log/hdfs_script.log"

# Exit immediately if any command fails
set -e

print_help() {
    echo "Usage: $0 <action> <directory> <disk_prefix>"
    echo "Actions:"
    echo " set_storage_policy <directory> <storage_type>"
    echo " unset_storage_policy <directory>"
    echo " modify_data_node_dir <disk_prefix>"
}

# Copy a file to <file>.bak before it is modified
backup_file() {
    local file=$1
    cp "$file" "$file.bak"
    echo "Backup created: $file.bak"
}

# Append a timestamped message to the log file
log() {
    local message=$1
    printf "%s %s\n" "$(date '+%Y-%m-%d %H:%M:%S')" "$message" >> "$log_file"
}

# Main operations

set_storage_policy() {
    local directory=$1
    local storage_type=$2
    hdfs storagepolicies -setStoragePolicy -path "$directory" -policy "$storage_type"
    log "Storage policy for $directory set to $storage_type"
}

unset_storage_policy() {
    local directory=$1
    hdfs storagepolicies -unsetStoragePolicy -path "$directory"
    log "Storage policy for $directory unset"
}

modify_data_node_dir() {
    local disk_type=$1
    # Back up the file
    backup_file "$hdfs_conf_dir/hdfs-site.xml"
    # Modify the file (the commented-out lines are earlier attempts)
    #sed -i -e "/<property>/,/<\/property>/ s|\(<value>\)\(.*\)$directory|\1[$disk_type]\2$directory,|" "$hdfs_conf_dir/hdfs-site.xml"
    #sed -i "s|$directory|[$disk_type]&|g" "$hdfs_conf_dir/hdfs-site.xml"
    #sed -i "s|file:///\([^,]*\)|[$disk_type]file:///\1|g" "$hdfs_conf_dir/hdfs-site.xml"
    # On the line after the dfs.datanode.data.dir <name> line, prefix every
    # file:/// entry with [disk_type]
    sed -i "/<name>dfs.datanode.data.dir<\/name>/{n;s|\(file:///\)|[$disk_type]\1|g}" "$hdfs_conf_dir/hdfs-site.xml"
    # Note: this prints the literal word "log" plus the message to stdout;
    # it does not call log(), so nothing is written to $log_file here
    echo log "dfs.datanode.data.dir updated for $disk_type"
}

# Check the number of arguments
if [ "$#" -lt 2 ]; then
    print_help
    exit 1
fi

action=$1
directory=$2
disk_prefix=$3

case "$action" in
    "set_storage_policy")
        storage_type=$3
        set_storage_policy "$directory" "$storage_type"
        ;;
    "unset_storage_policy")
        unset_storage_policy "$directory"
        ;;
    "modify_data_node_dir")
        # For this action the second argument is the disk type, e.g. SSD or [SSD]
        disk_prefix=$2
        disk_type=$(echo "$disk_prefix" | tr -d '[]')
        modify_data_node_dir "$disk_type"
        ;;
    *)
        echo "Invalid argument. Supported arguments are: set_storage_policy, unset_storage_policy, modify_data_node_dir"
        print_help
        exit 1
        ;;
esac

Set the storage policy to COLD in bulk:

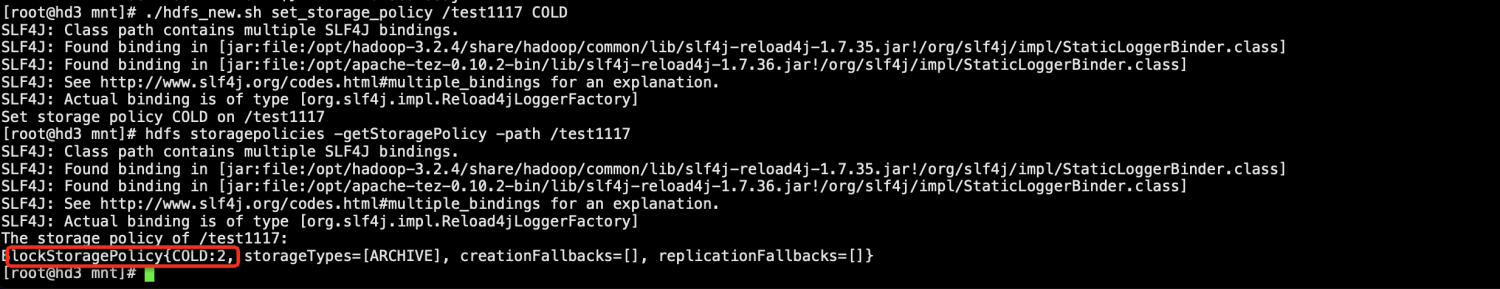

./hdfs_new.sh set_storage_policy /test1117 COLD

[root@hd3 mnt]# ./hdfs_new.sh set_storage_policy /test1117 COLD

Set storage policy COLD on /test1117

[root@hd3 mnt]# hdfs storagepolicies -getStoragePolicy -path /test1117

The storage policy of /test1117:

BlockStoragePolicy{COLD:2, storageTypes=[ARCHIVE], creationFallbacks=[], replicationFallbacks=[]}

[root@hd3 mnt]#

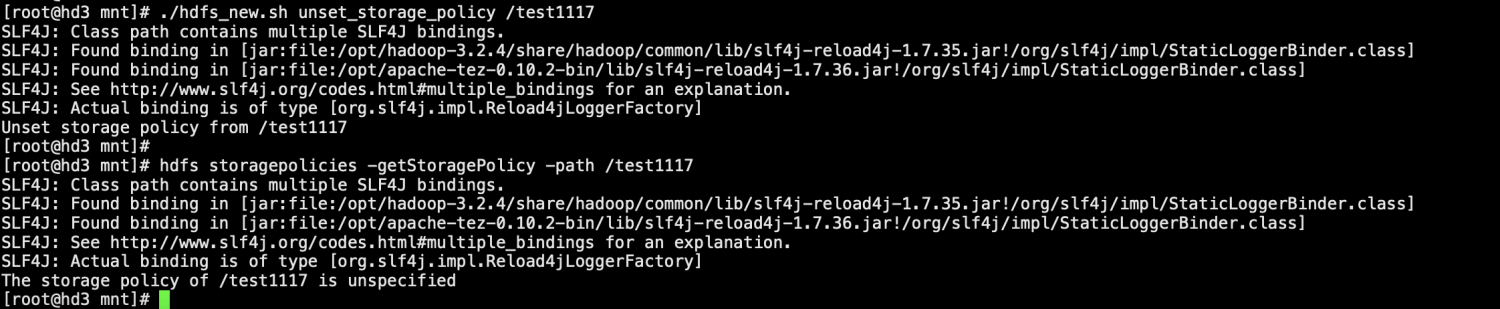

Unset the storage policy in bulk:

./hdfs_new.sh unset_storage_policy /test1117

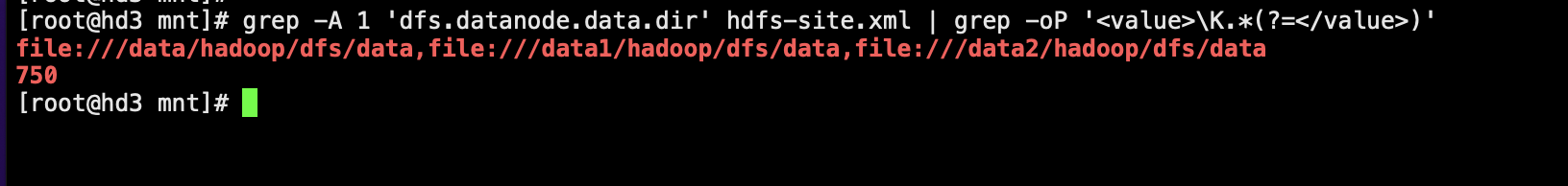

3. Modify hdfs-site.xml to add disk labels

hdfs-site.xml before the change:

[root@hd3 mnt]# ./hdfs_new.sh modify_data_node_dir SSD

Backup created: /mnt/hdfs-site.xml.bak

log dfs.datanode.data.dir updated for SSD

[root@hd3 mnt]#

hdfs-site.xml after the change:

[root@hd3 mnt]# ./hdfs_new.sh modify_data_node_dir SSD

Backup created: /mnt/hdfs-site.xml.bak

log dfs.datanode.data.dir updated for SSD
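The effect of the sed expression in `modify_data_node_dir` can be tried safely on a throwaway file. In this sketch, `tag_data_dirs` is a hypothetical wrapper that writes to stdout instead of editing in place, and the sample XML is a made-up fragment that assumes `<value>` sits on the line directly after `<name>`.

```shell
#!/bin/bash
# tag_data_dirs (hypothetical wrapper): apply the same substitution as
# modify_data_node_dir, but print the result instead of using sed -i.
tag_data_dirs() {
    local disk_type=$1 file=$2
    # Find the dfs.datanode.data.dir <name> line, move to the next line (n),
    # and prefix every file:/// on that line with [disk_type].
    sed "/<name>dfs.datanode.data.dir<\/name>/{n;s|\(file:///\)|[$disk_type]\1|g;}" "$file"
}

# Made-up sample fragment for illustration.
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
<property>
<name>dfs.datanode.data.dir</name>
<value>file:///data/hadoop/dfs/data,file:///data1/hadoop/dfs/data</value>
</property>
EOF

tag_data_dirs SSD "$tmp"
rm -f "$tmp"
```

Two properties of the expression are worth keeping in mind: it gives every directory the same tag, and running it a second time stacks another tag in front of the existing one, so the backup made by `backup_file` is the way back.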