Open-Source Big Data Cluster Deployment (21): Deploying Spark on YARN

3.0.1 Installing Spark on YARN (on every node)

cd /root/bigdata/
tar -xzvf spark-3.3.1-bin-hadoop3.tgz -C /opt/
ln -s /opt/spark-3.3.1-bin-hadoop3 /opt/spark
chown -R spark:spark /opt/spark-3.3.1-bin-hadoop3

3.0.2 Configuring environment variables and editing the configuration

cat /etc/profile.d/bigdata.sh
export SPARK_HOME=/opt/spark
export SPARK_CONF_DIR=/opt/spark/conf

Load the variables into the current shell:

source /etc/profile
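After sourcing the profile, it can be worth confirming that both variables actually point at a usable installation. The function below is a minimal sketch of such a check; the name `check_spark_env` is hypothetical and not part of Spark, and it only tests what the steps above set up (an executable `bin/spark-submit` under `SPARK_HOME` and an existing `SPARK_CONF_DIR`).

```shell
# Hypothetical helper: verify the variables exported by /etc/profile.d/bigdata.sh.
# Returns 0 when both look usable, 1 otherwise (problems are printed to stderr).
check_spark_env() {
  rc=0
  if [ -z "${SPARK_HOME:-}" ]; then
    echo "SPARK_HOME is not set" >&2
    rc=1
  elif [ ! -x "$SPARK_HOME/bin/spark-submit" ]; then
    echo "no executable spark-submit under $SPARK_HOME/bin" >&2
    rc=1
  fi
  if [ -z "${SPARK_CONF_DIR:-}" ]; then
    echo "SPARK_CONF_DIR is not set" >&2
    rc=1
  elif [ ! -d "$SPARK_CONF_DIR" ]; then
    echo "SPARK_CONF_DIR does not exist: $SPARK_CONF_DIR" >&2
    rc=1
  fi
  return "$rc"
}
```

Running `check_spark_env && echo "Spark environment looks fine"` on each node gives a quick pass/fail signal before continuing.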

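Edit YARN's capacity-scheduler.xml so that resources are scheduled by both CPU and memory (on every YARN node). The default DefaultResourceCalculator only takes memory into account, so it is replaced with DominantResourceCalculator: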

<property>
  <name>yarn.scheduler.capacity.resource-calculator</name>
  <!-- <value>org.apache.hadoop.yarn.util.resource.DefaultResourceCalculator</value> -->
  <value>org.apache.hadoop.yarn.util.resource.DominantResourceCalculator</value>
</property>

Enable log aggregation in yarn-site.xml and point yarn.log.server.url at the JobHistory Server web address:

<property>
  <description>Whether to enable log aggregation</description>
  <name>yarn.log-aggregation-enable</name>
  <value>true</value>
</property>
<property>
  <name>yarn.log.server.url</name>
  <value>http://master:19888/jobhistory/logs</value>
</property>

Edit mapred-site.xml (on every YARN node):

<property>
  <name>mapreduce.jobhistory.address</name>
  <value>hd1.dtstack.com:10020</value>
</property>
<property>
  <name>mapreduce.jobhistory.webapp.address</name>
  <value>hd1.dtstack.com:19888</value>
</property>
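Since the same XML edits have to be repeated on every YARN node, a small reader for Hadoop-style property files can help confirm that each node carries the intended value. The following is a rough sketch using only `awk`; `get_hadoop_property` is a hypothetical helper name, it ignores XML comments (such as the commented-out DefaultResourceCalculator line), and it assumes the plain `<name>key</name>` followed by `<value>...</value>` layout used above rather than being a full XML parser.

```shell
# Hypothetical helper: print the <value> of one property from a Hadoop XML file.
# Usage: get_hadoop_property FILE PROPERTY_NAME
# Exits non-zero when the property is not found.
get_hadoop_property() {
  awk -v key="$2" '
    { doc = doc $0 " " }                          # join the file into one string
    END {
      gsub(/<!--([^-]|-[^-])*-->/, "", doc)       # drop XML comments first
      marker = "<name>" key "</name>"
      pos = index(doc, marker)
      if (pos == 0) exit 1
      rest = substr(doc, pos + length(marker))
      start = index(rest, "<value>")
      stop = index(rest, "</value>")
      if (start == 0 || stop == 0 || stop < start) exit 1
      value = substr(rest, start + 7, stop - start - 7)
      gsub(/^[ \t]+|[ \t]+$/, "", value)          # trim surrounding whitespace
      print value
    }' "$1"
}
```

For example, `get_hadoop_property "$HADOOP_HOME/etc/hadoop/capacity-scheduler.xml" yarn.scheduler.capacity.resource-calculator` should print the DominantResourceCalculator class name once the edit is in place, assuming the configuration lives under `$HADOOP_HOME/etc/hadoop` as it does later in this guide.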

cd /opt/spark/conf

Spark configuration file, spark-defaults.conf (on every Spark node):

cat spark-defaults.conf
spark.eventLog.dir=hdfs:///user/spark/applicationHistory
spark.eventLog.enabled=true
spark.yarn.historyServer.address=http://hd1.dtstack.com:18018
spark.history.kerberos.enabled=true
spark.history.kerberos.principal=hdfs/hd1.dtstack.com@DTSTACK.COM
spark.history.kerberos.keytab=/etc/security/keytab/hdfs.keytab

Spark environment file, spark-env.sh (on every Spark node):

cat spark-env.sh

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

export SPARK_HISTORY_OPTS="-Dspark.history.ui.port=18018 -Dspark.history.fs.logDirectory=hdfs:///user/spark/applicationHistory"

export YARN_CONF_DIR=${HADOOP_HOME}/etc/hadoop
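The directory that applications write event logs to (`spark.eventLog.dir` in spark-defaults.conf) and the directory the history server reads from (`spark.history.fs.logDirectory` in spark-env.sh) must be the same, otherwise finished jobs never show up in the history UI. The sketch below compares the two; `check_event_log_dirs` is a hypothetical helper, and it assumes the two files are laid out as shown above, with the log directory passed through `SPARK_HISTORY_OPTS`.

```shell
# Hypothetical helper: compare the event log directory Spark writes to with the
# one the history server reads. Usage: check_event_log_dirs CONF_DIR
# Returns 0 on match, 1 on mismatch, 2 when either setting is missing.
check_event_log_dirs() {
  conf_dir=$1
  write_dir=$(sed -n -e 's/[[:space:]]*$//' \
    -e 's/^[[:space:]]*spark\.eventLog\.dir[[:space:]=]\{1,\}//p' \
    "$conf_dir/spark-defaults.conf" | tail -n 1)
  read_dir=$(sed -n \
    's/.*-Dspark\.history\.fs\.logDirectory=\([^[:space:]"]*\).*/\1/p' \
    "$conf_dir/spark-env.sh" | tail -n 1)
  if [ -z "$write_dir" ] || [ -z "$read_dir" ]; then
    echo "missing setting: eventLog.dir='$write_dir' logDirectory='$read_dir'" >&2
    return 2
  fi
  if [ "$write_dir" != "$read_dir" ]; then
    echo "mismatch: eventLog.dir='$write_dir' logDirectory='$read_dir'" >&2
    return 1
  fi
  echo "ok: $write_dir"
}
```

Calling `check_event_log_dirs /opt/spark/conf` on each Spark node should print the shared HDFS path when the two files agree.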

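Note: the history server has to read the event log files stored on HDFS, which is why the spark.history.kerberos.* settings in spark-defaults.conf use the hdfs principal and keytab.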

Create the corresponding HDFS directory and change its owner:

hdfs dfs -mkdir -p /user/spark/applicationHistory
hdfs dfs -chown -R spark /user/spark/

Submit a test job:

cd /opt/spark
./bin/spark-submit --master yarn --deploy-mode client --class org.apache.spark.examples.SparkPi examples/jars/spark-examples_2.12-3.3.1.jar
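The test command hardcodes the examples jar name, which changes with the Spark and Scala versions. As a hedged alternative, the sketch below wraps the same submission in a function that finds the jar by glob; `submit_spark_pi` is a hypothetical name, the fallback to `/opt/spark` mirrors the symlink created earlier, and the optional argument is the number of slices that SparkPi accepts.

```shell
# Hypothetical wrapper around the SparkPi test job shown above.
# Usage: submit_spark_pi [SLICES]
submit_spark_pi() {
  slices=${1:-10}
  spark_home=${SPARK_HOME:-/opt/spark}
  # Locate the examples jar without hardcoding the Scala/Spark version.
  set -- "$spark_home"/examples/jars/spark-examples_*.jar
  if [ ! -f "$1" ]; then
    echo "no spark-examples jar found under $spark_home/examples/jars" >&2
    return 1
  fi
  "$spark_home/bin/spark-submit" \
    --master yarn \
    --deploy-mode client \
    --class org.apache.spark.examples.SparkPi \
    "$1" "$slices"
}
```

`submit_spark_pi 20` would then run the same job with 20 slices; if several matching jars exist, the first one in glob order is used.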

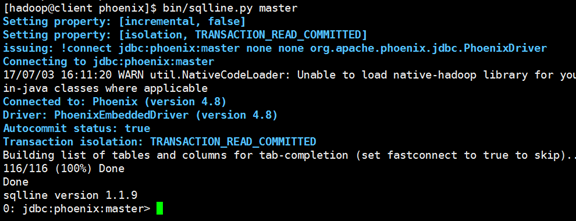

3.0.3 Starting the Spark history server

cd /opt/spark

Start the history server:

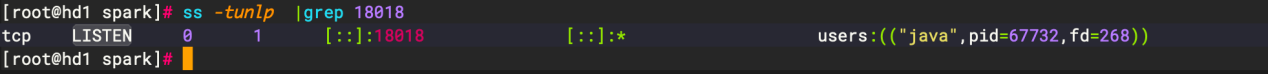

./sbin/start-history-server.sh

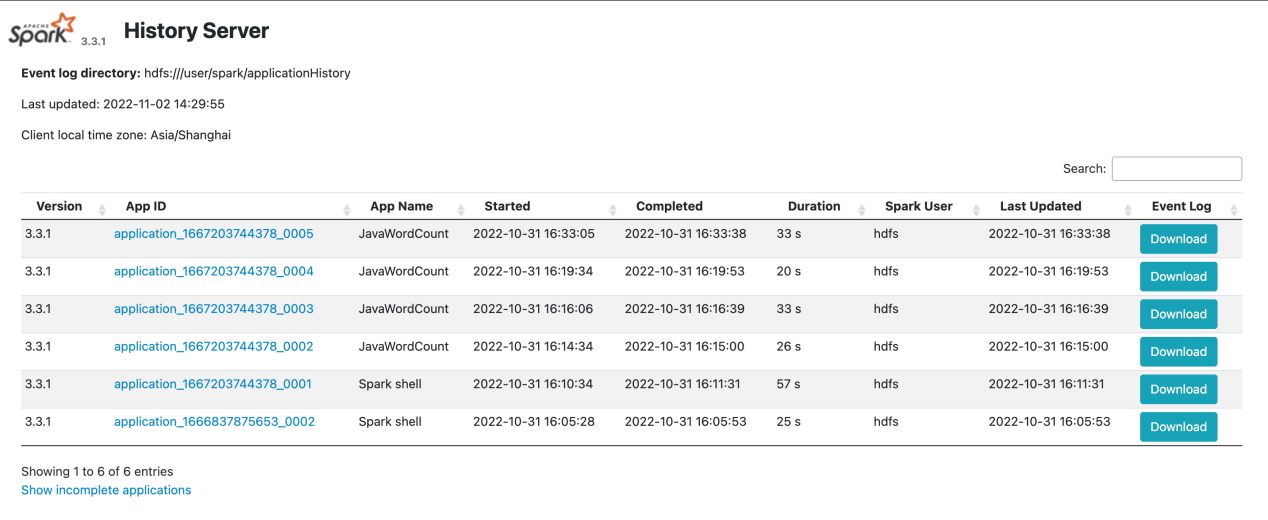

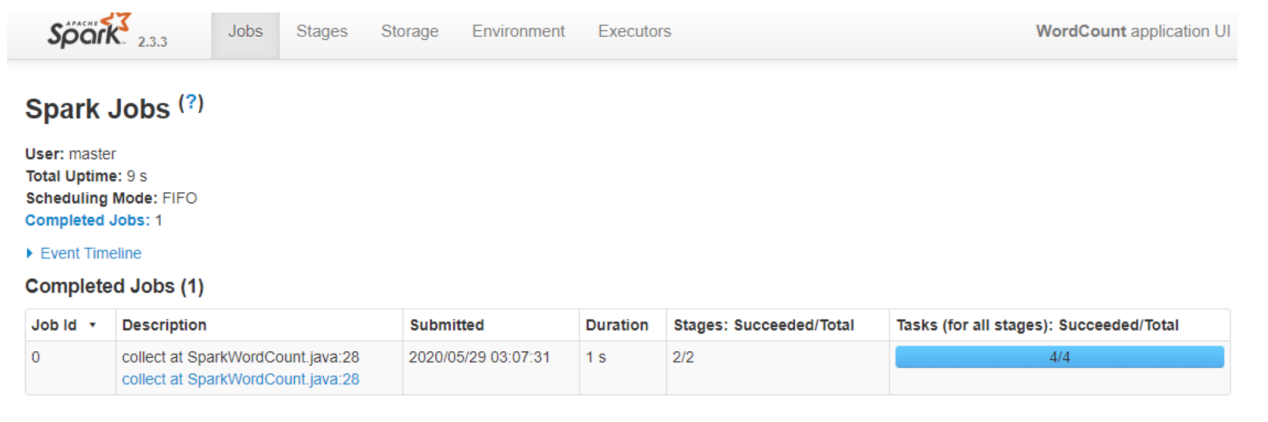

3.0.4 Checking the result

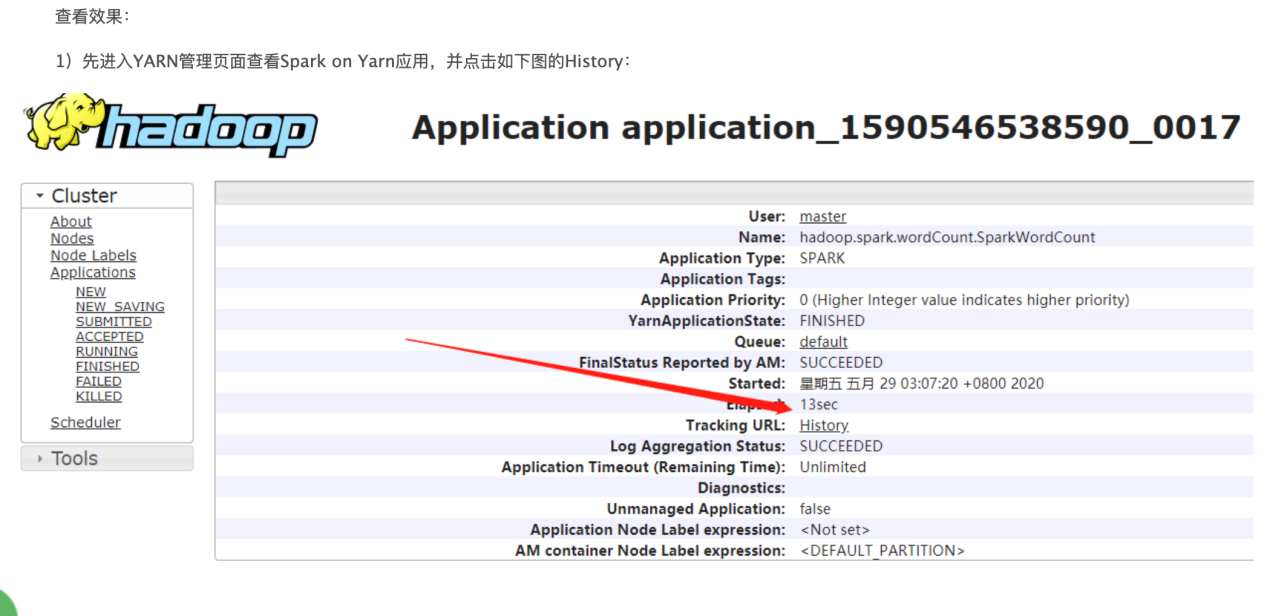

1) Open the YARN management page, find the Spark on YARN application, and click History, as shown in the figure:

Alternatively, access the history server directly:

http://ip:18018
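The same check can be scripted instead of opening a browser. The sketch below probes the history server's REST endpoint `/api/v1/applications` with `curl` and reports the HTTP status; `history_server_status` is a hypothetical helper, the default port 18018 is the one configured in spark-env.sh above, and it assumes the UI itself is reachable without authentication (a 401 or 403 would be reported as a failure here).

```shell
# Hypothetical helper: check that the history server answers on its REST API.
# Usage: history_server_status HOST [PORT]
history_server_status() {
  host=$1
  port=${2:-18018}
  url="http://$host:$port/api/v1/applications"
  # curl prints only the HTTP status code; on connection failure fall back to 000.
  code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$url") || code=000
  if [ "$code" = "200" ]; then
    echo "history server at $url answered HTTP $code"
    return 0
  fi
  echo "history server at $url answered HTTP $code" >&2
  return 1
}
```

For instance, `history_server_status hd1.dtstack.com` could be run right after `start-history-server.sh` to confirm the service came up.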